Expert Analysis Assessment

Expert Analysis Assessment (EAA) services are designed for organisations that produce non-academic expert analysis: think-tank publications, policy papers, risk analysis, strategic commentary, consultancy outputs, expert essays, analytical magazines, and other public- or client-facing analytical texts.

The purpose is not to summarise what these texts say, but to assess how they work as analytical objects: how arguments are built, how reasoning develops, how evidence functions, how values shape diagnosis, how conclusions are formed, and where analytical quality can be strengthened.

The EAA service stream connects structured assessment, institutional interpretation, tailored development, software-supported review, and long-term analytical system-building. It is built around the Expert Analysis Assessment module of Analysis Typology Coder and the Unified Analytical Coding Protocol with Addenda I–XV.

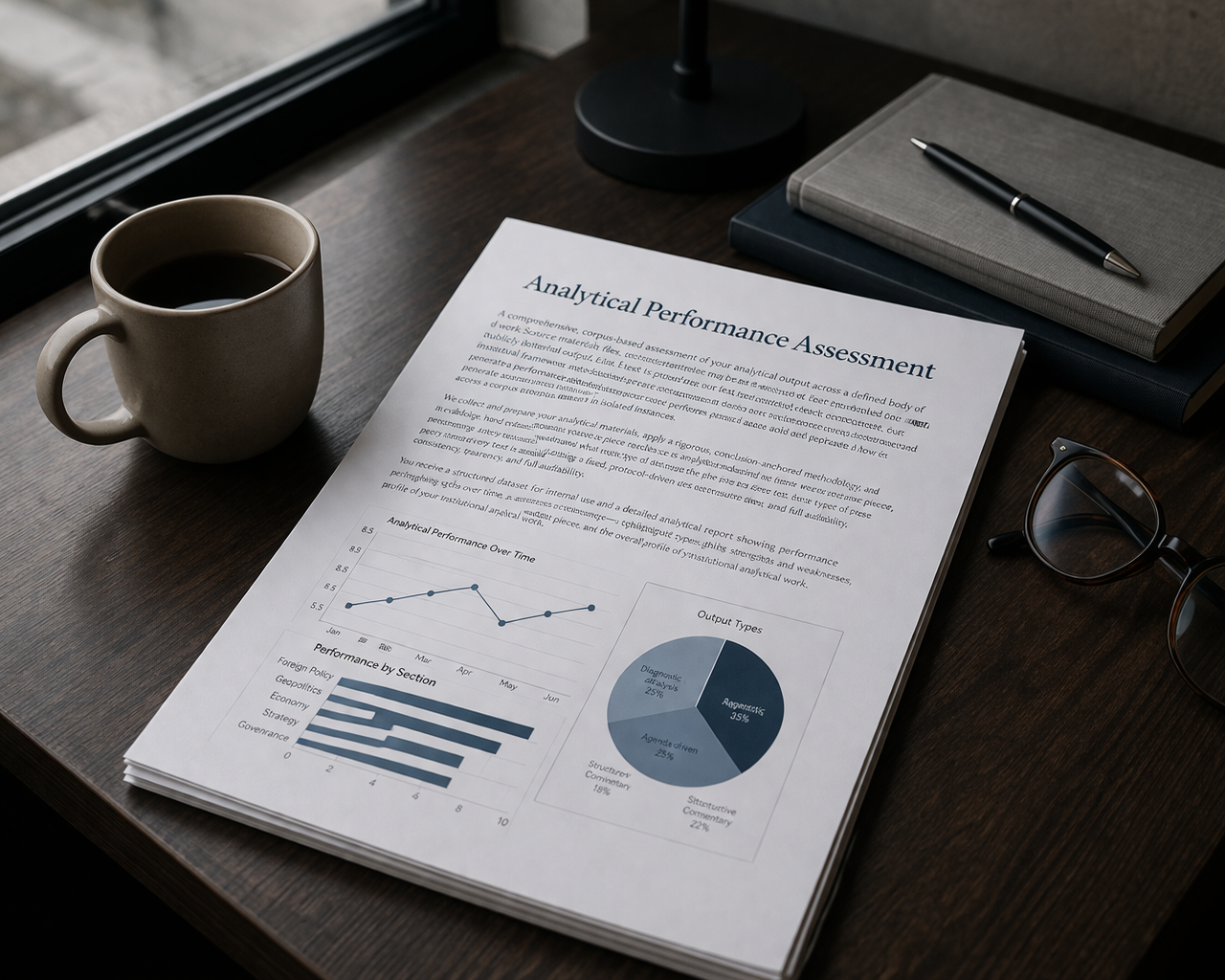

Analytical Performance Review

Using the Expert Analysis Assessment module of Analysis Typology Coder, we assess each output as a structured analytical object. The review examines how the argument is built, how reasoning develops, how evidence functions, how conclusions are formed, and where analytical strength or weakness actually lies.

The review is conclusion-anchored. It does not classify texts merely by topic, tone, or policy position. It identifies the final analytical closure of the text and assesses how the text’s own reasoning, evidence, values, uncertainty, and explanatory object operate in relation to that closure.

The review examines:

Reasoning Autonomy — whether the publication’s reasoning genuinely constrains, pressures, or reformulates its own claim.

Axiology Autonomy — whether diagnosis remains analytically separable from normative commitments.

Argumentative category — whether the output is best understood as diagnostic analysis, agenda-driven analysis, interpretive commentary, or structured opinion.

Substantive type — the explanatory object organising the text, such as foreign policy, strategic, security, geopolitical, institutional, procedural, political-economic, ideological, identity, legitimacy, coalition, leadership, or governance analysis.

Evidence architecture, uncertainty, confidence, and validation — how evidence functions, where ambiguity remains, whether boundary alternatives are active or resolved, and how robust the coding is.

This allows us to identify, across a body of work:

what kind of analysis your organisation produces most often;

where analytical reasoning is strongest;

where conclusions remain compressed, under-integrated, or weakly disciplined;

where normative preference begins to structure diagnosis;

which explanatory objects dominate across teams, themes, or publication formats;

which cases require anchor review, recalibration, or closer methodological inspection.

The review produces:

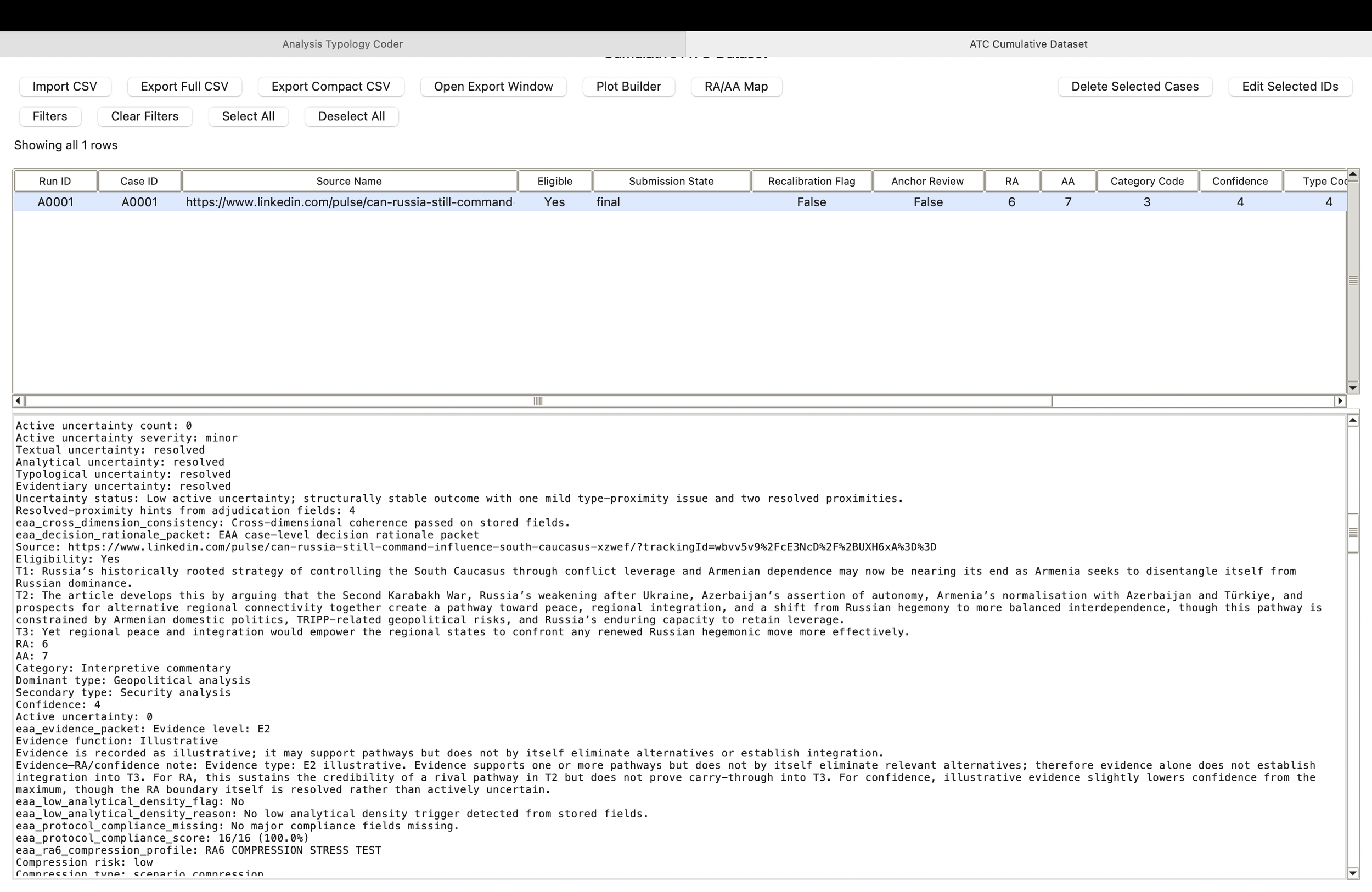

a structured analytical-performance dataset;

an interpretive analytical profile report;

comparative and visual outputs for internal discussion;

review flags, threshold observations, and development priorities;

a basis for future monitoring, training, editorial improvement, and institutional learning.

This is not a general impression of quality. It is a structured diagnosis of how your institution’s analytical work is built.

- Structured review of your organisation’s expert analytical output across a body of publications, formats, authors, teams, or institutional portfolios.

- A structured performance dataset and an analytical report interpreting key patterns, strengths, weaknesses, threshold positions, confidence issues, and areas for development.

- A clear institution-level understanding of analytical performance over time, a foundation for internal monitoring, and an evidence base for targeted development work.

“What cannot be seen systematically cannot be improved strategically.”

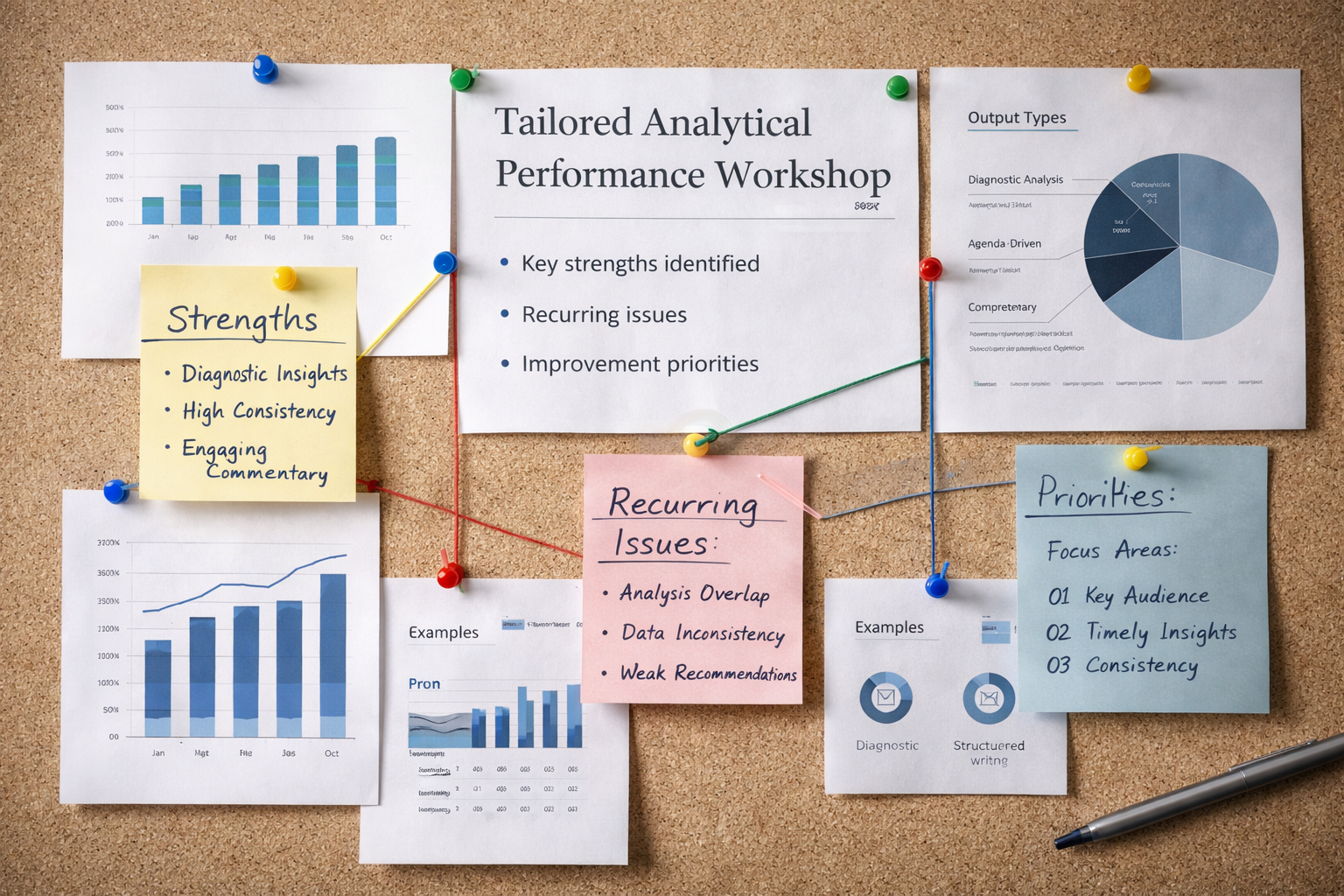

Tailored Analytical Development Workshop

A bespoke workshop built directly around your organisation’s own analytical output.

Rather than offering generic training, this workshop is designed in response to the findings of the EAA Analytical Performance Review. Using the evidence generated through Analysis Typology Coder, it focuses on the actual strengths, weaknesses, recurring patterns, threshold issues, and developmental needs visible in your publications.

The workshop can address, for example:

recurring weaknesses in analytical reasoning;

under-integrated or weakly disciplined conclusions;

weaknesses at the Reasoning Autonomy (RA) 5/6 or RA 6/7 boundary;

evaluative framing and its effect on diagnosis;

imbalance between explanation, evidence, and closure;

uncertainty handling and confidence calibration;

substantive-type confusion, such as security versus geopolitical or strategic versus foreign-policy analysis;

variation in performance across teams, sections, authors, or publication formats;

the differences between stronger and weaker exemplar texts within your own corpus.

This makes the workshop practical and institution-specific. It does not begin from abstract ideals of good analysis, but from the concrete analytical profile of your organisation’s work.

The workshop helps participants:

understand where patterns of strength and weakness lie;

see why those patterns matter for analytical quality and institutional credibility;

identify what stronger analytical practice looks like in concrete terms;

translate assessment findings into clearer standards for future work;

build shared internal vocabulary for reasoning, evidence, normativity, uncertainty, and analytical closure.

- A bespoke workshop designed in direct response to the findings of the EAA Analytical Performance Review.

- A targeted workshop programme built around your institution’s analytical profile, supported by examples, practical reflection, and applied exercises.

- Stronger institutional learning, clearer understanding of recurring analytical tendencies, and more focused improvement in future analytical practice.

“The most effective analytical development begins where your own patterns become visible.”

Core Analytical Development Workshop

Unlike the tailored workshop, this offering does not begin from a prior assessment of your organisation’s output. It introduces the conceptual and operational framework behind Expert Analysis Assessment and can be delivered as standalone training, as part of a longer-term development programme, or alongside the Analytical Performance Review and Tailored Analytical Development Workshop.

The workshop focuses on the structure of analysis itself. It examines how arguments are built, how reasoning constrains conclusions, how evaluative commitments interact with analytical judgement, and how different forms of analysis differ in logic, purpose, and quality.

It also trains participants to distinguish substantive types according to the political object organising the explanation, not according to loose topic labels. This is especially important in fields where terms such as foreign policy analysis, security analysis, strategic analysis, and geopolitical analysis are often used interchangeably.

The workshop covers, in practical terms:

how analytical arguments are formed;

how reasoning disciplines or fails to discipline conclusions;

how evidence functions as constraining, illustrative, or decorative;

how evaluative commitments shape diagnosis;

how to distinguish stronger and weaker analytical closure;

how the four argumentative categories differ in structure;

how substantive types should be differentiated according to the object under analysis;

how uncertainty, confidence, and resolved proximity should be understood;

how these distinctions can be applied in day-to-day analytical production.

- A comprehensive workshop introducing the framework, criteria, and logic of analytical work, with emphasis on how analysis is structured, assessed, and strengthened in practice.

- A structured training session built around core analytical concepts, practical examples, and analytical decomposition exercises.

- A shared understanding of analytical standards, stronger day-to-day analytical practice, and a more consistent analytical culture across teams.

“Strong analysis is not an instinct; it is a structure that can be learned.”

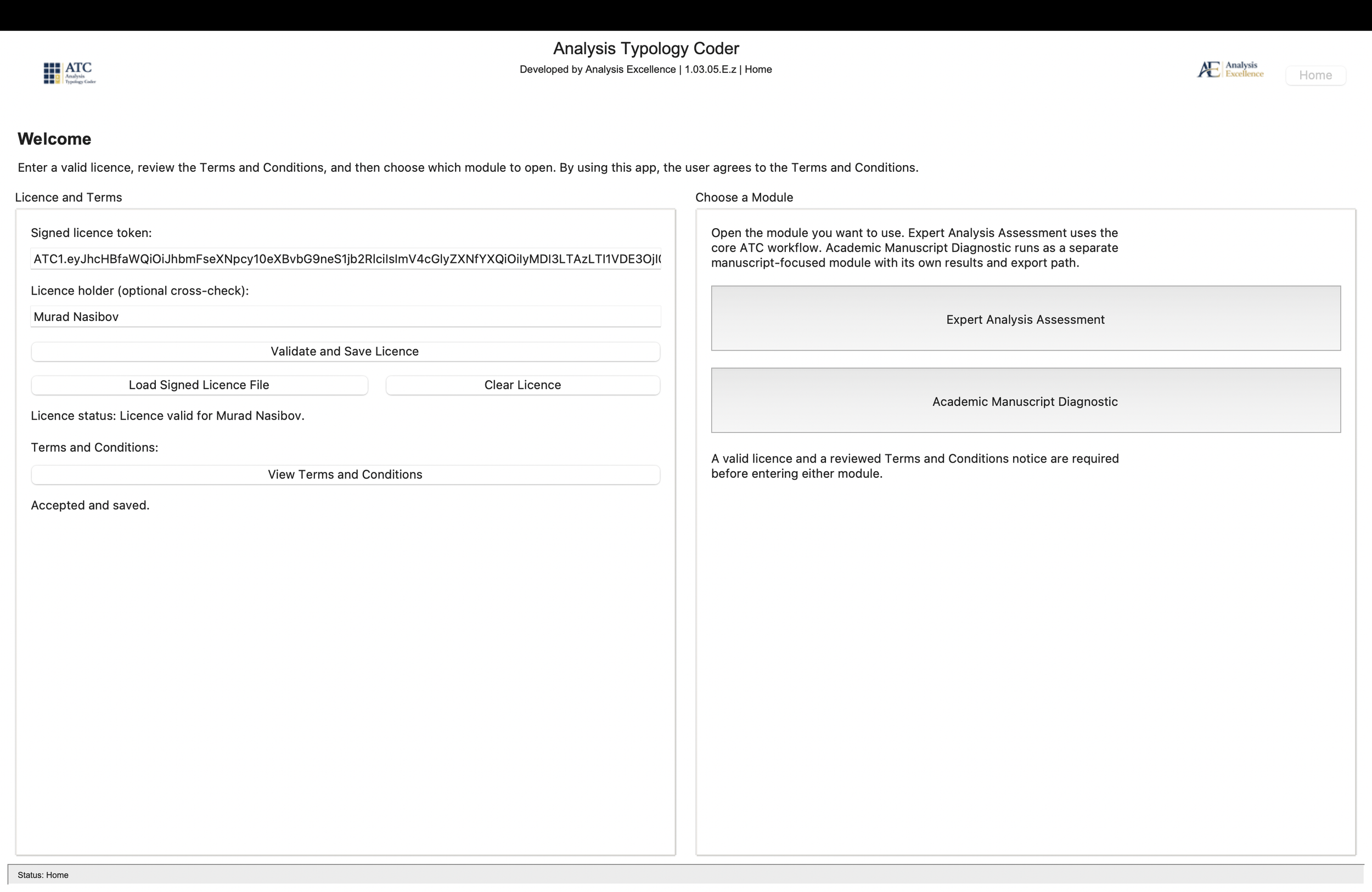

Analysis Typology Coder | Expert Analysis Assessment

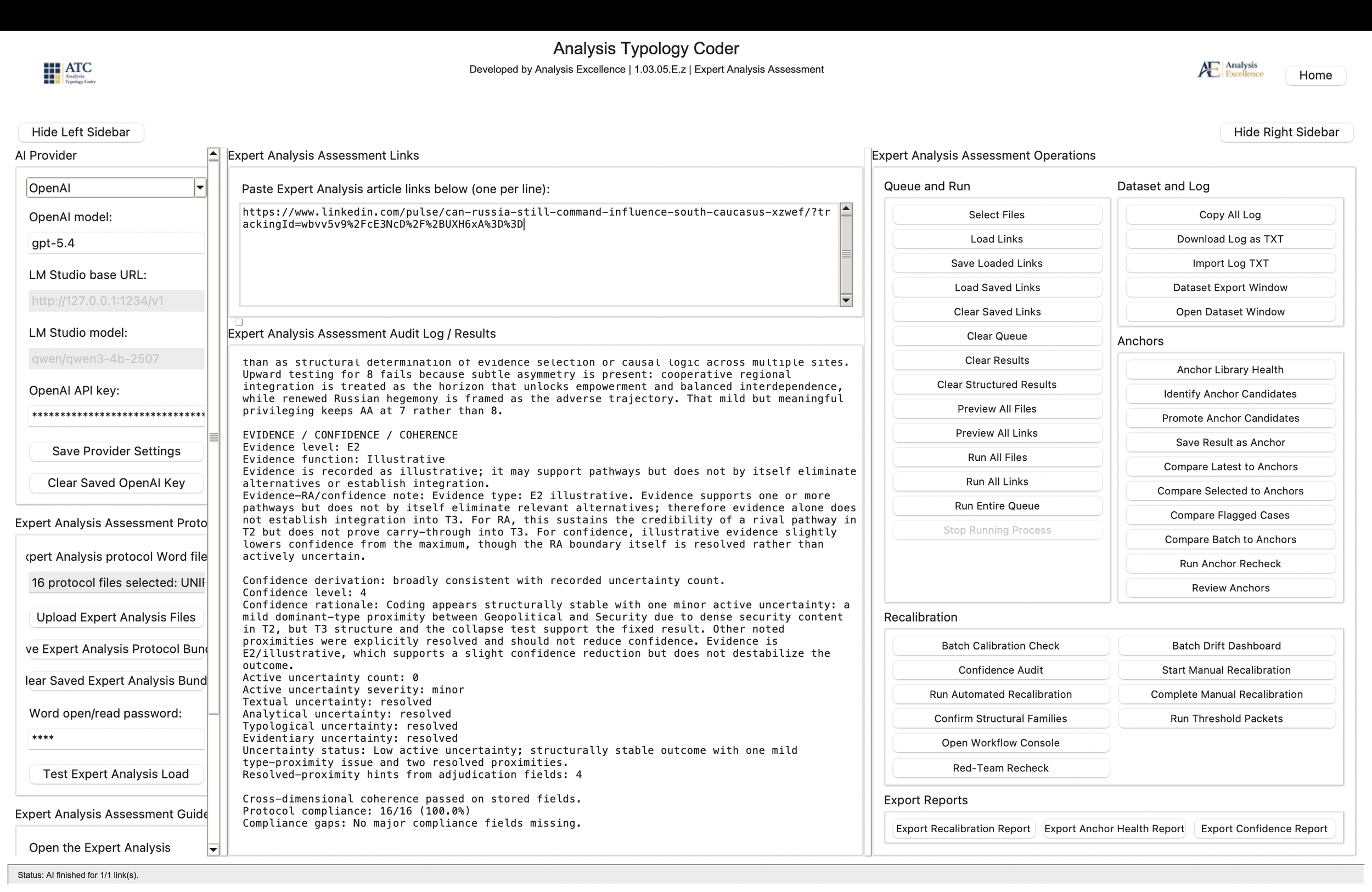

A specialised software-supported environment for the structured assessment of expert analytical texts.

The Expert Analysis Assessment module of Analysis Typology Coder is built on our proprietary conceptual framework, methodology, and operational protocols. It is not a generic AI writing, sentiment, topic-classification, or summarisation tool. Its purpose is more specific: to make the assessment of expert analytical work more systematic, transparent, reproducible, and institutionally usable.

EAA is designed for organisations that produce non-academic expert analysis, including:

think-tank publications;

policy papers;

risk analysis;

strategic commentary;

analytical magazine pieces;

consultancy outputs;

expert essays;

other forms of professional analytical writing.

For these texts, EAA applies a rule-bound assessment protocol to examine how the analysis is actually built. It assesses:

the structure of the argument;

the relationship between opening claim, developed reasoning, and final conclusion;

the extent to which reasoning genuinely constrains the conclusion;

the relationship between diagnosis and evaluative commitments;

the role of evidence in supporting or constraining the argument;

the type of analysis being produced;

the confidence, uncertainty, and review status of the result;

recurring patterns across a wider body of institutional output.

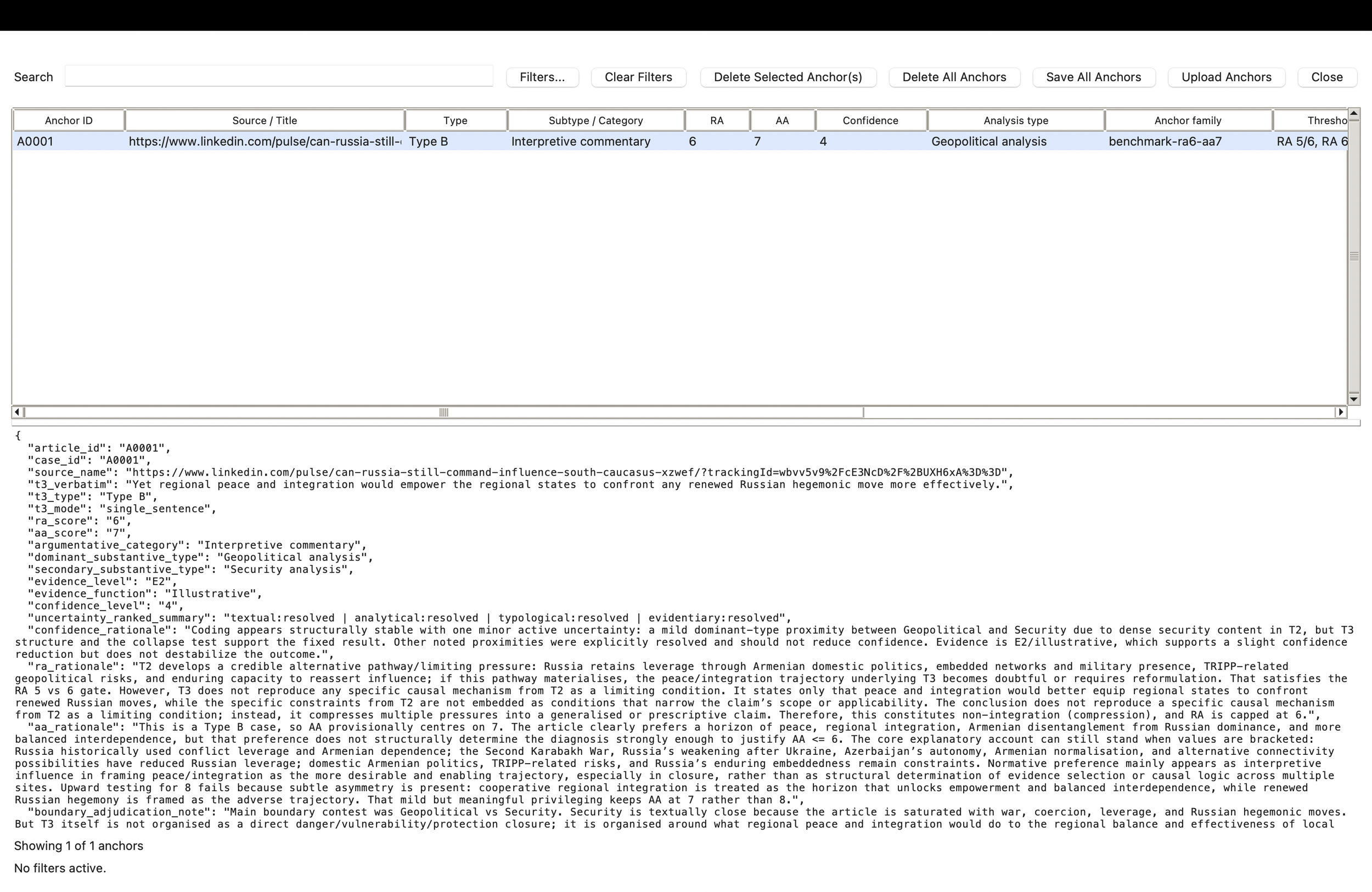

At the centre of the EAA module is a conclusion-anchored methodology. The software does not assess texts merely by topic, tone, or surface quality. It identifies the final analytical closure of a text and evaluates how reasoning, evidence, values, uncertainty, and explanatory object operate in relation to that closure. This makes it possible to move beyond impressionistic reading and loosely defined editorial judgement. Texts are assessed through a consistent, auditable, and methodologically grounded structure.

One of the software’s central strengths is that it does not merely produce one-off textual feedback. It creates structured analytical data. This allows organisations to detect recurring patterns, compare outputs systematically, maintain benchmark cases, identify threshold issues, support internal review, and generate reports for editorial, managerial, or strategic use.
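As a rough illustration of what working with this kind of structured data could look like, the sketch below groups a handful of coded records by team and reports the most frequent argumentative category and the mean Reasoning Autonomy score. It is not the Analysis Typology Coder schema or API: the `CodedText` fields, the `profile_by_team` helper, the sample records, and the assumption that Reasoning Autonomy is stored as an integer score are all hypothetical. Only the four category names come from the description of the review.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CodedText:
    """One assessed publication (hypothetical fields, not the ATC schema)."""
    title: str
    team: str
    category: str   # one of the four argumentative categories
    ra_score: int   # assumed integer Reasoning Autonomy score


def profile_by_team(records):
    """Return, per team, the text count, most frequent category, and mean RA."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record.team].append(record)

    profile = {}
    for team, items in grouped.items():
        categories = Counter(item.category for item in items)
        profile[team] = {
            "texts": len(items),
            "dominant_category": categories.most_common(1)[0][0],
            "mean_ra": round(mean(item.ra_score for item in items), 2),
        }
    return profile


# Invented sample data, for illustration only.
SAMPLE = [
    CodedText("Paper A", "Security", "diagnostic analysis", 6),
    CodedText("Paper B", "Security", "diagnostic analysis", 7),
    CodedText("Paper C", "Security", "structured opinion", 4),
    CodedText("Brief D", "Economy", "interpretive commentary", 5),
    CodedText("Brief E", "Economy", "agenda-driven analysis", 5),
]

if __name__ == "__main__":
    for team, summary in profile_by_team(SAMPLE).items():
        print(team, summary)
```

The point of the sketch is only that once each text is a record rather than a paragraph of feedback, comparisons across teams, formats, or time become simple aggregations.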

EAA is particularly valuable for institutions whose analytical publications form part of their professional, public, or client-facing profile. It provides different forms of value to different users:

for analysts and researchers — a structured environment for reviewing outputs, understanding analytical standards, and tracking development;

for editors and managers — a clearer basis for supervising quality, identifying recurring weaknesses, and improving consistency;

for institutional leadership — a stronger foundation for decisions on training, recruitment, editorial direction, quality assurance, and long-term analytical development.

EAA is not designed to replace judgement. It is designed to support judgement with structure. Human review remains essential, especially in boundary cases, but the software gives that review a clearer methodological basis, a structured dataset, and an auditable trail.

Licensing note

The Expert Analysis Assessment module can be licensed as a standalone module within the Analysis Typology Coder (ATC) app. Institutions that also work with academic manuscripts may license EAA together with the Academic Manuscript Diagnostic (AMD) module in one integrated ATC installation. Organisations focused only on expert analytical outputs do not need to license AMD.

Licensing can be accompanied by onboarding, user guidance, and training for methodologically consistent use. Where appropriate, software access may follow an initial Analytical Performance Review, so that clients first become familiar with the framework, methodology, and interpretation of results. In other cases, module licensing and training can be arranged directly according to institutional needs.

- Licensed access to the Expert Analysis Assessment module of Analysis Typology Coder, with onboarding and training for methodologically consistent use. Optional implementation support can cover dataset interpretation, audit-log reading, internal review routines, anchor-case management, recalibration practices, and reporting.

- A licensed assessment system with structured results, cumulative datasets, exportable reports, audit logs, review tools, benchmark-anchor functions, recalibration features, user guide, and training.

- More consistent, reproducible, and auditable assessment of expert analytical work; stronger internal monitoring capacity; clearer review and development routines; and a documented basis for long-term analytical improvement.

“Rigour becomes sustainable when judgement is supported by structure.”

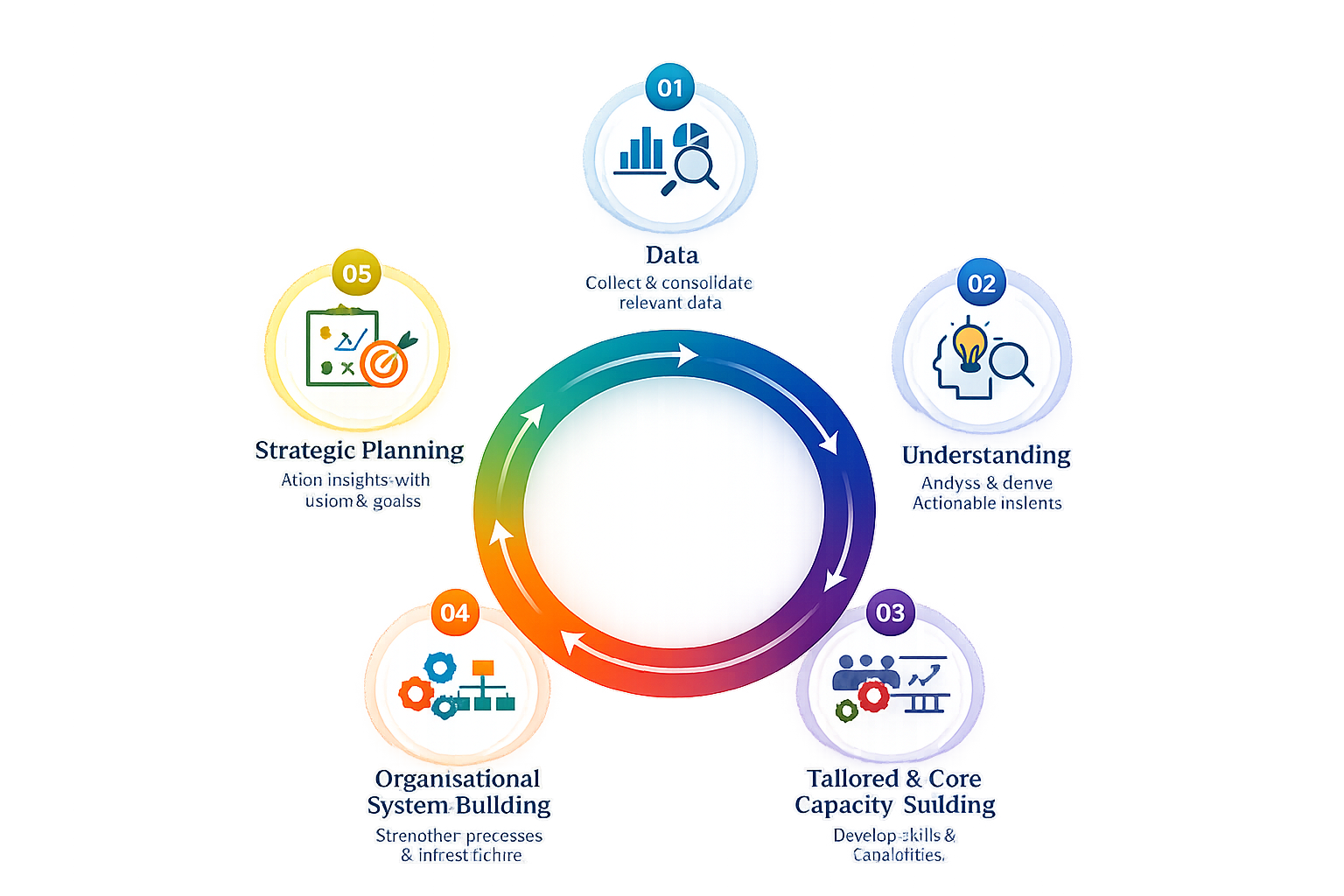

Analytical Excellence Partnership

A long-term institutional partnership for organisations that want to lead analytical quality strategically, not only assess it periodically.

While our reviews, workshops, and software focus on the assessment and development of analytical work at the level of texts, teams, and workflows, the Excellence Partnership operates at the level of institutional strategy, leadership, and organisational direction. It is designed for organisations that want to treat analytical performance not as an occasional concern, but as a structured institutional capability.

The partnership supports leadership in building the conditions for sustained analytical excellence. This may include:

defining internal analytical standards;

developing performance indicators for analytical output;

designing internal evaluation and review procedures;

building frameworks for long-term monitoring and comparison;

interpreting performance data for strategic decision-making;

aligning analytical development with institutional goals, reputation, and positioning.

Its purpose is to help organisations move from isolated assessment and training towards a continuous and strategically guided approach to analytical performance.

Depending on institutional needs, the partnership may include:

regular analytical performance reviews;

longitudinal interpretation of assessment data;

advisory support on analytical development strategy;

executive-level discussions on standards, priorities, and organisational direction;

support in embedding analytical evaluation into internal systems and routines.

This offering is particularly relevant for organisations whose analytical output is a core institutional product and whose credibility, influence, and reputation depend on its quality, consistency, and development over time.

The Excellence Partnership is therefore not a single service, but an ongoing cooperation through which analytical excellence becomes an institutional function: measured, interpreted, developed, monitored, and strategically led.

Taken together, our offerings form a continuous cycle:

analytical output is assessed systematically;

strengths and weaknesses are interpreted clearly;

teams are trained on this basis;

internal systems are strengthened;

leadership translates this into standards, direction, and sustained development.

The Excellence Partnership supports this final stage: turning assessment, training, and systems into long-term institutional capability.

- A long-term institutional cooperation supporting leadership in designing, implementing, and steering structured systems for analytical performance monitoring and development.

- Strategic advisory support, regular performance reviews, guidance on standards and indicators, internal evaluation design, and leadership-level interpretation of analytical performance data.

- Analytical excellence becomes an institutional capability rather than a one-off exercise, supported by clearer standards, stronger oversight, and sustained long-term development.

“Analytical excellence becomes durable when it is built into the institution, not left to individuals alone.”