Our Methodology

Our work rests on a structured methodological framework developed specifically for evaluating and developing analytical performance. The framework addresses a problem that many organisations face but rarely measure systematically: how analytical work actually performs over time and across authors, teams, and thematic areas, and how analytical quality can be understood, developed, and managed in a structured way.

The methodology combines a conceptual framework, a set of analytical criteria, a structured evaluation procedure, and a dedicated software tool. Together, these elements make it possible to evaluate analytical work in a consistent and comparable way, to generate performance data, and to translate this data into practical analytical development and institutional strategy.

At the core of the methodology is the idea that analytical work can be assessed systematically if clear criteria, consistent evaluation procedures, and structured comparison are in place. Rather than relying on general impressions or occasional editorial judgement, the methodology makes it possible to evaluate analytical work across time, across teams, and across types of analytical output, and to identify patterns of strengths, weaknesses, and development.

The methodology focuses in particular on the analytical structure of texts: how arguments are developed, how evidence is used, how claims are supported, how normative and analytical elements are combined, and how conclusions are derived. This makes it possible to assess not only whether a text is informative or well written, but also whether it is analytically strong, balanced, and consistent.

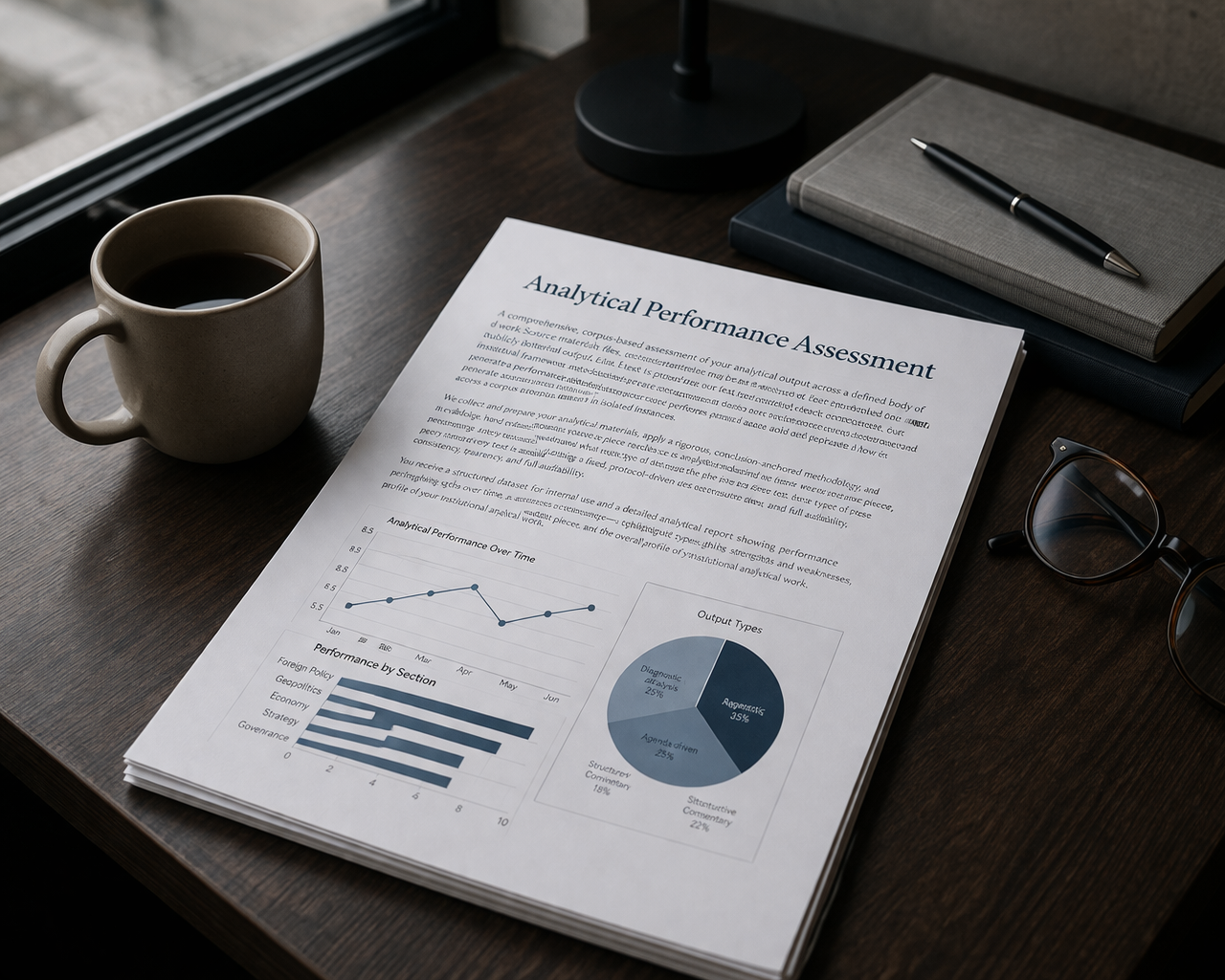

A key feature of the methodology is that it produces structured data. Each evaluated text becomes part of a dataset that allows organisations to see patterns over time: which types of analysis are strongest, where recurring weaknesses appear, how analytical performance develops, and how different teams or thematic areas perform. In this way, analytical work becomes measurable and comparable without being reduced to simplistic metrics.

The methodology is implemented through a dedicated software tool developed specifically for this purpose. The software allows analytical pieces to be processed in a structured way, stores results in a dataset, and enables systematic comparison across time and across analytical outputs. This makes it possible to move from one-time evaluation to continuous analytical performance monitoring.

Overall, the methodology is designed to connect four levels that are often treated separately: individual analytical texts, analysts and teams, organisational performance monitoring, and institutional strategy. By connecting these levels, the methodology makes it possible not only to evaluate analytical work, but also to develop analytical capacity and to manage analytical performance as an institutional function.

For this reason, the methodology is more than an evaluation tool. It is a framework for understanding, developing, and leading analytical performance over time.

-

Our methodology is built on the conceptual distinction between reasoning autonomy and axiology autonomy, and on the interaction between the two.

-

Our approach combines qualitative judgement with systematic procedure so that results are reliable, valid, reproducible, and auditable. The aim is not automated scoring, but structured analytical evaluation supported by software.

-

Our work follows a continuous analytical development cycle:

Data → Understanding → Tailored & Core Capacity-Building → Organisational System-Building → Strategic Planning